Scraped market signals are everywhere, and business moves fast. We spot a price drop, a shift in product rankings, or a stock-out. Usually, that signal gets trapped in a spreadsheet or a chat thread.

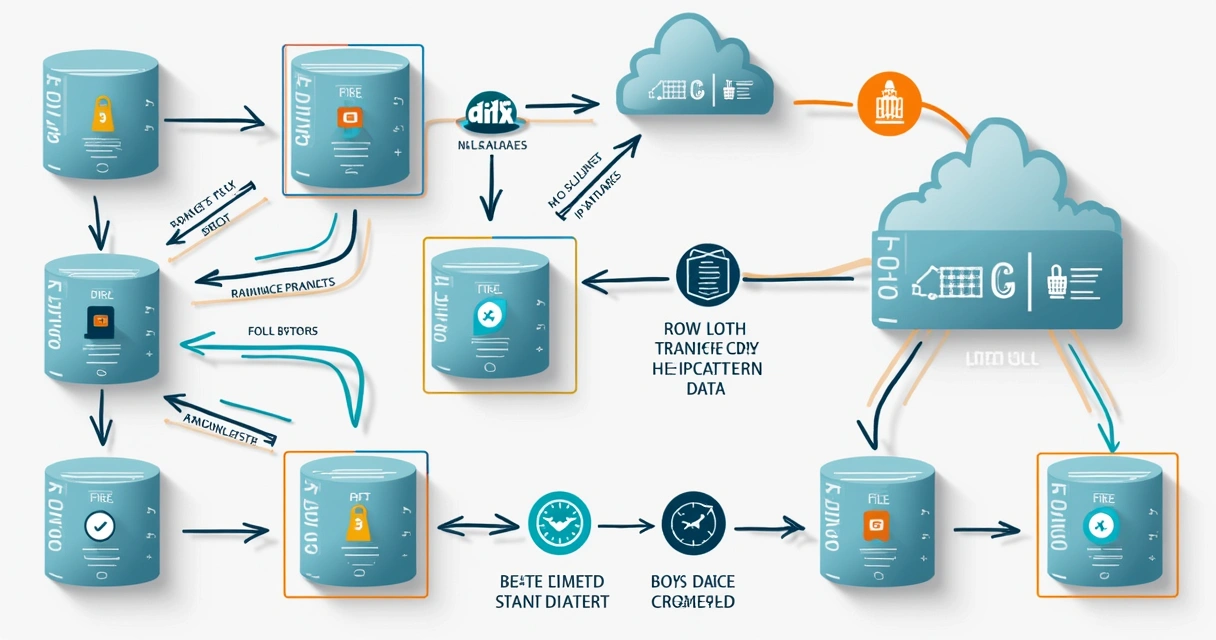

But in our work at EWS Limited, we have seen that the real value only shows when teams turn those signals into coordinated action—and do it quickly. This is the idea behind a sync-first pipeline: every market signal becomes a structured work item, with a clear owner, routed to the right people, and tracked across tools—Jira, ServiceNow, Zendesk, GitHub, and more.

Fast action on signals beats high volume with no follow-through.

In this guide, we show step by step how syncing scraped market signals can give you an edge, how to keep signals from getting lost, and how to turn new market data into real changes. We draw on our experience helping Series B and C companies, established IT teams, global mobility managers, and partner management squads, and on tools like Exalate, which has shaped how teams sync, map, and act on data. As we advise our clients at EWS Limited, every step focuses on acting faster, with less noise and fewer missed signals.

Scraped market data powers critical tasks—but let’s be honest, the current workflow gets in its own way. Say your crawler detects a competitor price gap, a dropped product ranking, or a negative review. The typical workflow looks something like this:

We have seen this pattern in every fast-growing company, from overflowing recruiting pipelines to global mobility gaps. (For more on workforce automation, see our article on payroll automation for global workforce efficiency.)

In our experience, the big leap forward comes from moving to a sync-first pipeline. This means:

We have partnered with companies that get the most out of two-way sync and real-time issue updates, using Exalate to connect teams across national borders and departments. For these teams, each market signal maps to a specific work type:

Each ticket is mapped, scored, and routed by a script or by Exalate’s AI co-pilot—so only real, actionable work enters the team’s backlog.

Signals should lead to changes, not just alerts.

It’s tempting to launch your scrapers and collect everything, but we’ve watched companies regret it. Instead, define a tight data schema, a data contract, before you start. This controls noise and improves trust in the data. The data contract answers:

We advise using primary keys that cannot shift unexpectedly (something as simple as SKU plus exact domain or listing ID) and clear, unambiguous timestamps that people can read, such as ISO 8601 in UTC. Before running large jobs, we model a few flows end to end, so that when the first signal appears, the work item is ready to sync. This saves resources and keeps the pipeline focused.
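To make the data contract concrete, here is a minimal Python sketch of one record type, assuming a price signal keyed on SKU plus domain. The class name `PriceSignal` and its fields are hypothetical, chosen for illustration; your contract will carry whatever fields your flows need. It rejects timestamps without a time zone and always emits ISO 8601 in UTC.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)
class PriceSignal:
    """One scraped price observation (hypothetical data contract)."""
    sku: str
    domain: str
    price: Decimal
    currency: str          # ISO 4217 code such as "EUR"
    observed_at: datetime  # must carry a time zone

    def __post_init__(self) -> None:
        # Reject records that would be ambiguous downstream.
        if self.observed_at.tzinfo is None:
            raise ValueError("observed_at must be timezone-aware")
        if len(self.currency) != 3 or not self.currency.isupper():
            raise ValueError("currency must be an upper-case ISO 4217 code")
        if self.price < 0:
            raise ValueError("price must be non-negative")

    @property
    def key(self) -> str:
        # Stable primary key: exact domain (lower-cased) plus SKU.
        return f"{self.domain.strip().lower()}|{self.sku.strip()}"

    @property
    def observed_at_iso(self) -> str:
        # Unambiguous timestamp: ISO 8601, converted to UTC.
        return self.observed_at.astimezone(timezone.utc).isoformat()


signal = PriceSignal("A-100", "Shop.Example.com", Decimal("19.99"), "EUR",
                     datetime(2025, 3, 1, 9, 30, tzinfo=timezone.utc))
print(signal.key)              # shop.example.com|A-100
print(signal.observed_at_iso)  # 2025-03-01T09:30:00+00:00
```

Making the class frozen means a record cannot be altered after validation, so the key a work item was created under stays the key it is tracked under.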

Collection is not just about the data; it is also about staying out of trouble. Honeypot research indexed in PubMed reports that automated traffic now makes up 47.4% of internet activity, with most bots blending routine automation and customized behavior. Bot detection systems often use aggressive rate limits, pattern blocking, and fingerprinting. Yet, according to studies from MIT Sloan, general-purpose bot detection can still be error-prone and can even block legitimate requests if not carefully managed.

To avoid being blocked and to maintain sustainable access, we advise putting in place the following guardrails:

Bot pattern analysis suggests that structure and flexibility both matter: static scrapers get blocked, while too much randomness leads to drift and errors.

At EWS Limited, we help teams maintain just enough variation and traceability—adjusting with every change in bot response or pattern recognition.
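Since the right guardrails depend on your targets, here is only a hedged sketch of one common pattern: exponential backoff with bounded jitter when a site answers 429 (Too Many Requests) or 503 (Service Unavailable), with every retry logged for traceability. The `fetch` callable is a hypothetical stand-in for your HTTP client, and the base delay, cap, and jitter fraction are assumptions to tune, not recommended values.

```python
import random
import time
from typing import Callable, Optional

RETRYABLE = {429, 503}  # Too Many Requests, Service Unavailable


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0,
                  jitter: float = 0.2, rng: Optional[random.Random] = None) -> float:
    """Exponential backoff with bounded jitter.

    The delay doubles per attempt up to `cap`, then varies by +/- `jitter`
    (a fraction), so timing is not rigid but never drifts far.
    """
    rng = rng if rng is not None else random.Random()
    delay = min(cap, base * (2 ** attempt))
    return delay * (1 + rng.uniform(-jitter, jitter))


def fetch_with_backoff(fetch: Callable[[], int], max_attempts: int = 5,
                       sleep: Callable[[float], None] = time.sleep,
                       log: Callable[[str], None] = print) -> int:
    """Call `fetch` (hypothetical; returns an HTTP status) and retry on 429/503.

    Each retry is logged so throttled crawls stay traceable.
    Returns the last status code seen.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    status = fetch()
    for attempt in range(max_attempts - 1):
        if status not in RETRYABLE:
            break
        delay = backoff_delay(attempt)
        log(f"retry={attempt + 1} status={status} sleep={delay:.2f}s")
        sleep(delay)
        status = fetch()
    return status
```

Bounding the jitter (plus or minus 20% by default here) reflects the balance described above: enough variation to avoid a rigid rhythm, not so much that crawl timing becomes unpredictable. Injecting `sleep` and `log` also makes the retry behavior easy to test without waiting.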

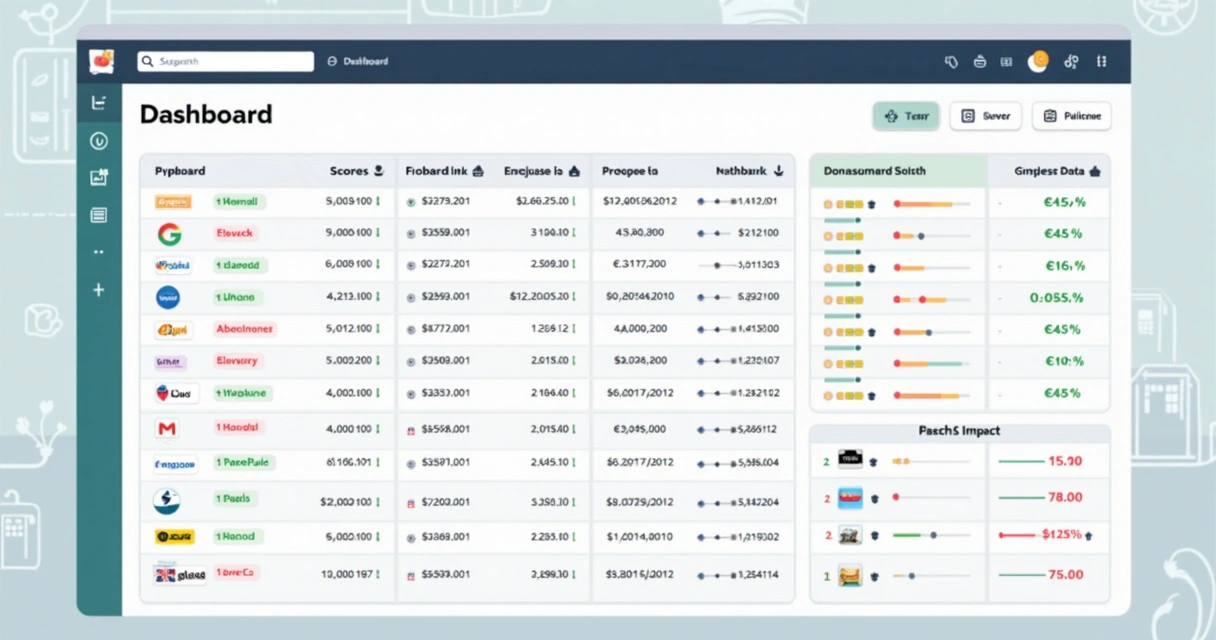

Once data arrives, reconciling units, currencies, and locales is a classic hurdle. Prices in yen, euros, and US dollars, with different date formats and decimal separators, can cause errors if they are sent to a global team without normalization.
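A minimal normalization sketch follows, assuming each source tells you its decimal separator and currency code. The `RATES_TO_USD` table is hypothetical and fixed for illustration only; in practice, rates should come from a dated source so each signal converts at the rate of its own day. `Decimal` avoids the rounding surprises that binary floats cause with money.

```python
from decimal import Decimal

# Hypothetical fixed rates, for illustration only. Use dated rates from a
# trusted source so each signal converts at the rate of its own day.
RATES_TO_USD = {
    "USD": Decimal("1"),
    "EUR": Decimal("1.08"),
    "JPY": Decimal("0.0067"),
}


def parse_price(text: str, decimal_sep: str) -> Decimal:
    """Parse a locale-formatted number such as '1.299,50' or '1,299.50'.

    `decimal_sep` must be ',' or '.'; the other character is treated as a
    thousands separator and removed, along with ordinary and no-break spaces.
    Currency symbols are assumed to be stripped already.
    """
    if decimal_sep not in {",", "."}:
        raise ValueError("decimal_sep must be ',' or '.'")
    group_sep = "." if decimal_sep == "," else ","
    cleaned = text.strip().replace("\u00a0", "").replace(" ", "").replace(group_sep, "")
    return Decimal(cleaned.replace(decimal_sep, "."))


def to_usd(amount: Decimal, currency: str) -> Decimal:
    """Convert to USD and round to cents."""
    rate = RATES_TO_USD.get(currency.upper())
    if rate is None:
        raise ValueError(f"no rate configured for {currency!r}")
    return (amount * rate).quantize(Decimal("0.01"))


print(to_usd(parse_price("1.299,50", ","), "EUR"))  # 1403.46
print(to_usd(parse_price("12,800", "."), "JPY"))    # 85.76
```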

Here’s how we’ve seen the best teams approach normalization and scoring:

When signals are normalized and scored first, only real issues reach the ticket queue. This has helped our clients reduce noise, focus on changes that matter, and avoid task overload. EWS Limited applies the same approach to global hiring data and workforce management, as described in our guide to EOR-enabled hiring.
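Scoring can start simple. The sketch below, assuming prices are already normalized to USD, scores a price change by its relative size times a business weight and opens a ticket only above a threshold. The formula, the `revenue_weight` parameter, and the 5% default threshold are illustrative assumptions; real scoring would reflect your own margins and priorities.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class ScoredSignal:
    key: str
    score: float
    reasons: list[str] = field(default_factory=list)


def score_price_change(key: str, old_usd: Decimal, new_usd: Decimal,
                       revenue_weight: float = 1.0) -> ScoredSignal:
    """Toy impact score: size of the relative price move times a business weight."""
    if old_usd <= 0:
        return ScoredSignal(key, 0.0, ["no valid baseline price"])
    change = float((new_usd - old_usd) / old_usd)
    return ScoredSignal(key, abs(change) * revenue_weight,
                        [f"price moved {change:+.1%}"])


def should_open_ticket(signal: ScoredSignal, threshold: float = 0.05) -> bool:
    # Only signals at or above the threshold become work items.
    return signal.score >= threshold


big = score_price_change("shop.example.com|A-100", Decimal("100.00"), Decimal("90.00"))
small = score_price_change("shop.example.com|A-200", Decimal("100.00"), Decimal("99.00"))
print(big.reasons, should_open_ticket(big))      # ['price moved -10.0%'] True
print(small.reasons, should_open_ticket(small))  # ['price moved -1.0%'] False
```

Keeping the human-readable `reasons` next to the score means whoever picks up the ticket can see why it was opened without rerunning the pipeline.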

Once we know the market signal is trustworthy and impactful, it is time to create a work ticket. We have found the following steps make this process work across teams and platforms:

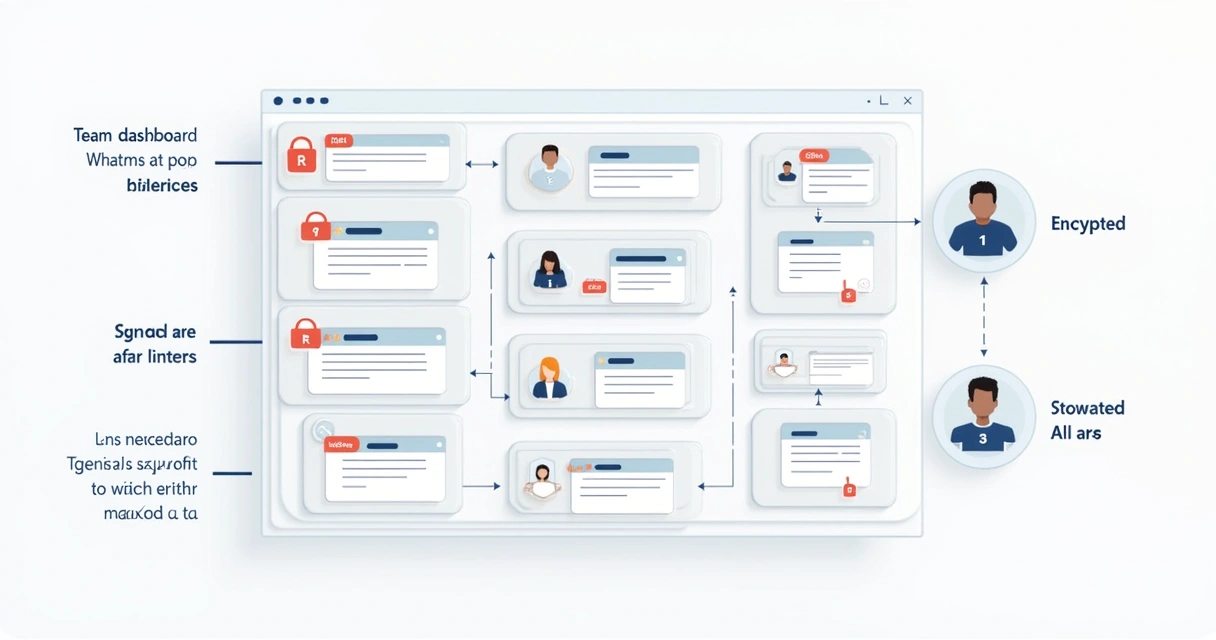

When we build these flows, scripting (or using an AI co-pilot) helps map edge cases and add logic. Exalate is a great example of this—teams can route and tune flows for edge cases, regional policy, or custom approval steps, without losing traceability or breaking privacy requirements.

One issue, one owner, one result—across every tool your team already knows.
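The "one issue, one owner" rule can be enforced with a deduplication key. In this sketch, a hypothetical in-memory `TicketStore` stands in for Jira, Zendesk, or a sync tool; it derives a key from the signal type plus the product key, so a repeated signal updates the existing work item instead of opening a new one.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class WorkItem:
    dedup_key: str
    title: str
    owner: str
    occurrences: int = 1


@dataclass
class TicketStore:
    """Hypothetical in-memory stand-in for a tracker; a real pipeline would
    call the tracker's API (or a sync tool) instead of a dict."""
    items: dict[str, WorkItem] = field(default_factory=dict)

    def upsert(self, signal_key: str, signal_type: str, title: str, owner: str) -> WorkItem:
        # Same product + same signal type -> same work item, one owner.
        raw = f"{signal_type}|{signal_key}".encode("utf-8")
        dedup_key = hashlib.sha256(raw).hexdigest()[:16]
        item = self.items.get(dedup_key)
        if item is None:
            item = WorkItem(dedup_key, title, owner)
            self.items[dedup_key] = item
        else:
            item.occurrences += 1  # repeat signal: update, don't duplicate
        return item


store = TicketStore()
first = store.upsert("shop.example.com|A-100", "price_gap", "Competitor undercut on A-100", "pricing")
again = store.upsert("shop.example.com|A-100", "price_gap", "Competitor undercut on A-100", "pricing")
print(first is again, again.occurrences, len(store.items))  # True 2 1
```

The first owner is kept on repeats by design, so ownership never silently changes while a work item is open.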

This approach means pricing teams never get bugs, engineers don’t see out-of-stock events, and support teams don’t miss urgent brand risks.
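A routing table is one simple way to achieve this separation. The mapping below is an assumption for illustration: the signal types, team names, and tool assignments are placeholders for your own. Unknown signal types fall back to a triage queue rather than disappearing.

```python
from typing import NamedTuple


class Route(NamedTuple):
    team: str
    tool: str


# Illustrative routing table; signal types, teams, and tools are assumptions.
ROUTES = {
    "price_gap": Route("pricing", "Jira"),
    "ranking_drop": Route("marketing", "Jira"),
    "stock_out": Route("operations", "ServiceNow"),
    "negative_review": Route("support", "Zendesk"),
    "scraper_bug": Route("engineering", "GitHub"),
}


def route(signal_type: str) -> Route:
    # Unknown types go to triage instead of being silently dropped.
    return ROUTES.get(signal_type, Route("triage", "Jira"))


print(route("stock_out"))  # Route(team='operations', tool='ServiceNow')
```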

Security is as important as speed. Scraped data can include credentials, email addresses, product information, or even user data, so strong security controls and audit trails are never optional. In our consultancy, we guide clients to:

This gives leadership and IT teams the trust to expand syncs and to bring more teams into the loop. For those facing compliance-heavy environments or operating in sensitive markets, this step unlocks cross-team sync and speed without tradeoffs.
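Because the right controls differ by client, here is just one hedged example of two common ones: redacting email addresses and obvious secrets before a payload leaves the scraper, and writing an audit record that stores a hash of what was synced rather than the data itself. The regular expressions are deliberately simple assumptions and will miss many real-world formats; treat them as placeholders for a proper PII and secret scanner.

```python
import hashlib
import json
import re
from datetime import datetime, timezone

# Deliberately simple patterns for illustration; real detection needs more.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SECRET_RE = re.compile(r"(?i)\b(api[_-]?key|token|password)\s*[:=]\s*\S+")


def redact(text: str) -> str:
    """Mask email addresses and key/token/password assignments."""
    text = EMAIL_RE.sub("[email]", text)
    return SECRET_RE.sub(lambda m: f"{m.group(1)}=[redacted]", text)


def audit_entry(actor: str, action: str, payload: dict) -> dict:
    """Audit record that stores a SHA-256 of the payload, not the payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return {
        "at": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "payload_sha256": hashlib.sha256(body).hexdigest(),
    }


print(redact("Contact jane.doe@example.com, api_key=abc123"))
# Contact [email], api_key=[redacted]
```

Serializing with `sort_keys=True` makes the hash independent of key order, so the same payload always produces the same audit fingerprint.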

A common pitfall is to celebrate growing the amount of data or the number of alerts. In our view, and based on years of experience at EWS Limited, the only thing that matters is solving problems faster—with fewer misses and less manual work.
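To measure that, track time to action and misses rather than alert counts. This sketch, assuming you can export a detection time and an optional resolution time for each signal, computes the median hours to resolution and the share of signals never resolved. The input shape is an assumption; adapt it to whatever your tracker exports.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


def time_to_action(events: list[tuple[datetime, Optional[datetime]]]) -> dict:
    """Each event is (detected_at, resolved_at or None) for one signal.

    Returns the median hours from detection to resolution and the miss rate
    (share of signals never resolved).
    """
    hours = [(done - seen).total_seconds() / 3600
             for seen, done in events if done is not None]
    misses = sum(1 for _, done in events if done is None)
    return {
        "median_hours": median(hours) if hours else None,
        "miss_rate": misses / len(events) if events else 0.0,
    }


t0 = datetime(2025, 3, 1, 9, 0)
events = [
    (t0, t0 + timedelta(hours=2)),
    (t0, t0 + timedelta(hours=6)),
    (t0, None),
    (t0, t0 + timedelta(hours=4)),
]
print(time_to_action(events))  # {'median_hours': 4.0, 'miss_rate': 0.25}
```

The median is used rather than the mean so that a single long-running ticket does not hide an otherwise fast response.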
